Any Event That Follows a Response and Increases Its Probability of Occurring Again.

Chapter 8. Learning

8.2 Changing Behaviour through Reinforcement and Punishment: Operant Conditioning

Learning Objectives

- Outline the principles of operant conditioning.

- Explain how learning can be shaped through the use of reinforcement schedules and secondary reinforcers.

In classical conditioning the organism learns to associate new stimuli with natural biological responses such as salivation or fear. The organism does not learn something new but rather begins to perform an existing behaviour in the presence of a new signal. Operant conditioning, on the other hand, is learning that occurs based on the consequences of behaviour and can involve the learning of new actions. Operant conditioning occurs when a dog rolls over on command because it has been praised for doing so in the past, when a schoolroom bully threatens his classmates because doing so allows him to get his way, and when a child gets good grades because her parents threaten to punish her if she doesn't. In operant conditioning the organism learns from the consequences of its own actions.

How Reinforcement and Punishment Influence Behaviour: The Research of Thorndike and Skinner

Psychologist Edward L. Thorndike (1874-1949) was the first scientist to systematically study operant conditioning. In his research Thorndike (1898) observed cats who had been placed in a "puzzle box" from which they tried to escape ("Video Clip: Thorndike's Puzzle Box"). At first the cats scratched, bit, and swatted haphazardly, without any idea of how to get out. But eventually, and accidentally, they pressed the lever that opened the door and exited to their prize, a scrap of fish. The next time the cat was constrained within the box, it attempted fewer of the ineffective responses before carrying out the successful escape, and after several trials the cat learned to almost immediately make the correct response.

Observing these changes in the cats' behaviour led Thorndike to develop his law of effect, the principle that responses that create a typically pleasant outcome in a particular situation are more likely to occur again in a similar situation, whereas responses that produce a typically unpleasant outcome are less likely to occur again in the situation (Thorndike, 1911). The essence of the law of effect is that successful responses, because they are pleasurable, are "stamped in" by experience and thus occur more frequently. Unsuccessful responses, which produce unpleasant experiences, are "stamped out" and subsequently occur less frequently.

When Thorndike placed his cats in a puzzle box, he found that they learned to engage in the important escape behaviour faster after each trial. Thorndike described the learning that follows reinforcement in terms of the law of effect.

Watch: "Thorndike's Puzzle Box" [YouTube]: http://www.youtube.com/watch?v=BDujDOLre-8


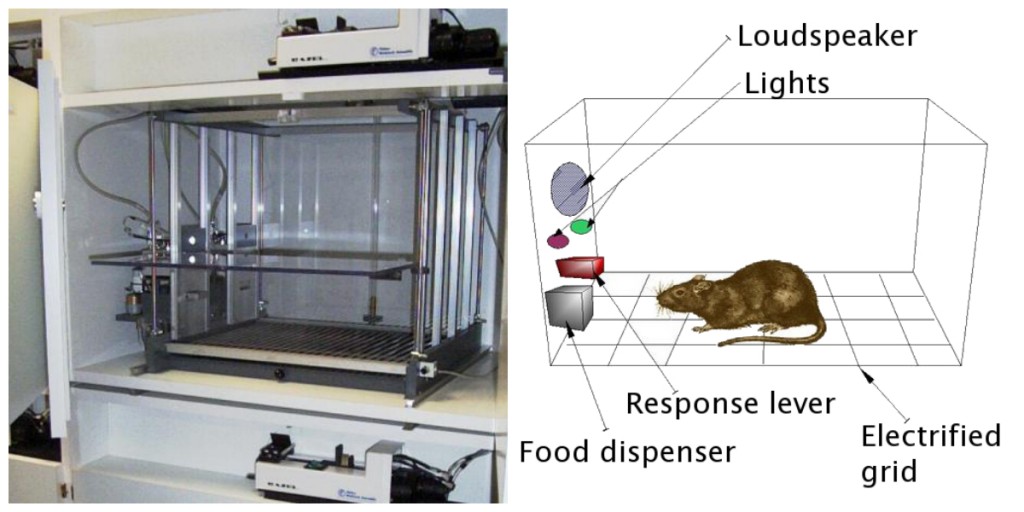

The influential behavioural psychologist B. F. Skinner (1904-1990) expanded on Thorndike's ideas to develop a more complete set of principles to explain operant conditioning. Skinner created specially designed environments known as operant chambers (usually called Skinner boxes) to systematically study learning. A Skinner box (operant chamber) is a structure that is big enough to fit a rodent or bird and that contains a bar or key that the organism can press or peck to release food or water. It also contains a device to record the animal's responses (Figure 8.5).

The most basic of Skinner's experiments was quite similar to Thorndike's research with cats. A rat placed in the chamber reacted as one might expect, scurrying about the box and sniffing and clawing at the floor and walls. Eventually the rat chanced upon a lever, which it pressed to release pellets of food. The next time around, the rat took a little less time to press the lever, and on successive trials, the time it took to press the lever became shorter and shorter. Soon the rat was pressing the lever as fast as it could eat the food that appeared. As predicted by the law of effect, the rat had learned to repeat the action that brought about the food and cease the actions that did not.

Skinner studied, in detail, how animals changed their behaviour through reinforcement and punishment, and he developed terms that explained the processes of operant learning (Table 8.1, "How Positive and Negative Reinforcement and Punishment Influence Behaviour"). Skinner used the term reinforcer to refer to any event that strengthens or increases the likelihood of a behaviour, and the term punisher to refer to any event that weakens or decreases the likelihood of a behaviour. And he used the terms positive and negative to refer to whether a reinforcement was presented or removed, respectively. Thus, positive reinforcement strengthens a response by presenting something pleasant after the response, and negative reinforcement strengthens a response by reducing or removing something unpleasant. For example, giving a child praise for completing his homework represents positive reinforcement, whereas taking Aspirin to reduce the pain of a headache represents negative reinforcement. In both cases, the reinforcement makes it more likely that behaviour will occur again in the future.

| Operant conditioning term | Description | Outcome | Example |
|---|---|---|---|
| Positive reinforcement | Add or increase a pleasant stimulus | Behaviour is strengthened | Giving a student a prize after he or she gets an A on a test |
| Negative reinforcement | Reduce or remove an unpleasant stimulus | Behaviour is strengthened | Taking painkillers that eliminate pain increases the likelihood that you will take painkillers again |
| Positive punishment | Present or add an unpleasant stimulus | Behaviour is weakened | Giving a student extra homework after he or she misbehaves in class |
| Negative punishment | Reduce or remove a pleasant stimulus | Behaviour is weakened | Taking away a teen's computer after he or she misses curfew |

Reinforcement, either positive or negative, works by increasing the likelihood of a behaviour. Punishment, on the other hand, refers to any event that weakens or reduces the likelihood of a behaviour. Positive punishment weakens a response by presenting something unpleasant after the response, whereas negative punishment weakens a response by reducing or removing something pleasant. A child who is grounded after fighting with a sibling (positive punishment) or who loses out on the opportunity to go to recess after getting a poor grade (negative punishment) is less likely to repeat these behaviours.
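The four terms reduce to two yes-or-no questions: is a stimulus added or removed, and is that stimulus pleasant or unpleasant? The short Python sketch below encodes that two-by-two scheme. The function name and its parameters are hypothetical, chosen only for illustration.

```python
def classify_consequence(stimulus_is_added: bool, stimulus_is_pleasant: bool) -> str:
    """Name the operant conditioning term for a consequence.

    "Positive" means a stimulus is presented; "negative" means one is removed.
    Reinforcement strengthens behaviour; punishment weakens it.
    """
    if stimulus_is_added:
        # Adding something pleasant strengthens; adding something unpleasant weakens.
        return "positive reinforcement" if stimulus_is_pleasant else "positive punishment"
    # Removing something pleasant weakens; removing something unpleasant strengthens.
    return "negative punishment" if stimulus_is_pleasant else "negative reinforcement"


if __name__ == "__main__":
    # Praise for homework: a pleasant stimulus is added.
    print(classify_consequence(stimulus_is_added=True, stimulus_is_pleasant=True))
    # Painkiller removes a headache: an unpleasant stimulus is removed.
    print(classify_consequence(stimulus_is_added=False, stimulus_is_pleasant=False))
```

The sketch treats every consequence as falling into exactly one cell, which is a simplification.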

Although the distinction between reinforcement (which increases behaviour) and punishment (which decreases it) is normally clear, in some cases it is difficult to determine whether a reinforcer is positive or negative. On a hot day a cool breeze could be seen as a positive reinforcer (because it brings in cool air) or a negative reinforcer (because it removes hot air). In other cases, reinforcement can be both positive and negative. One may smoke a cigarette both because it brings pleasure (positive reinforcement) and because it eliminates the craving for nicotine (negative reinforcement).

It is also important to note that reinforcement and punishment are not simply opposites. The use of positive reinforcement in changing behaviour is almost always more effective than using punishment. This is because positive reinforcement makes the person or animal feel better, helping create a positive relationship with the person providing the reinforcement. Types of positive reinforcement that are effective in everyday life include verbal praise or approval, the awarding of status or prestige, and direct financial payment. Punishment, on the other hand, is more likely to create only temporary changes in behaviour because it is based on coercion and typically creates a negative and adversarial relationship with the person providing the punishment. When the person who provides the punishment leaves the situation, the unwanted behaviour is likely to return.

Creating Complex Behaviours through Operant Conditioning

Perhaps you remember watching a movie or being at a show in which an animal (maybe a dog, a horse, or a dolphin) did some pretty amazing things. The trainer gave a command and the dolphin swam to the bottom of the pool, picked up a ring on its nose, jumped out of the water through a hoop in the air, dived again to the bottom of the pool, picked up another ring, and then took both of the rings to the trainer at the edge of the pool. The animal was trained to do the trick, and the principles of operant conditioning were used to train it. But these complex behaviours are a far cry from the simple stimulus-response relationships that we have considered thus far. How can reinforcement be used to create complex behaviours such as these?

One way to expand the use of operant learning is to change the schedule on which the reinforcement is applied. To this point we have only discussed a continuous reinforcement schedule, in which the desired response is reinforced every time it occurs; whenever the dog rolls over, for example, it gets a biscuit. Continuous reinforcement results in relatively fast learning but also rapid extinction of the desired behaviour once the reinforcer disappears. The problem is that because the organism is used to receiving the reinforcement after every behaviour, the responder may give up quickly when it doesn't appear.

Most real-world reinforcers are not continuous; they occur on a partial (or intermittent) reinforcement schedule, that is, a schedule in which the responses are sometimes reinforced and sometimes not. In comparison to continuous reinforcement, partial reinforcement schedules lead to slower initial learning, but they also lead to greater resistance to extinction. Because the reinforcement does not appear after every behaviour, it takes longer for the learner to determine that the reward is no longer coming, and thus extinction is slower. The four types of partial reinforcement schedules are summarized in Table 8.2, "Reinforcement Schedules."

| Reinforcement schedule | Explanation | Real-world example |
|---|---|---|
| Fixed-ratio | Behaviour is reinforced after a specific number of responses. | Factory workers who are paid according to the number of products they produce |
| Variable-ratio | Behaviour is reinforced after an average, but unpredictable, number of responses. | Payoffs from slot machines and other games of chance |
| Fixed-interval | Behaviour is reinforced for the first response after a specific amount of time has passed. | People who earn a monthly salary |
| Variable-interval | Behaviour is reinforced for the first response after an average, but unpredictable, amount of time has passed. | Person who checks email for messages |

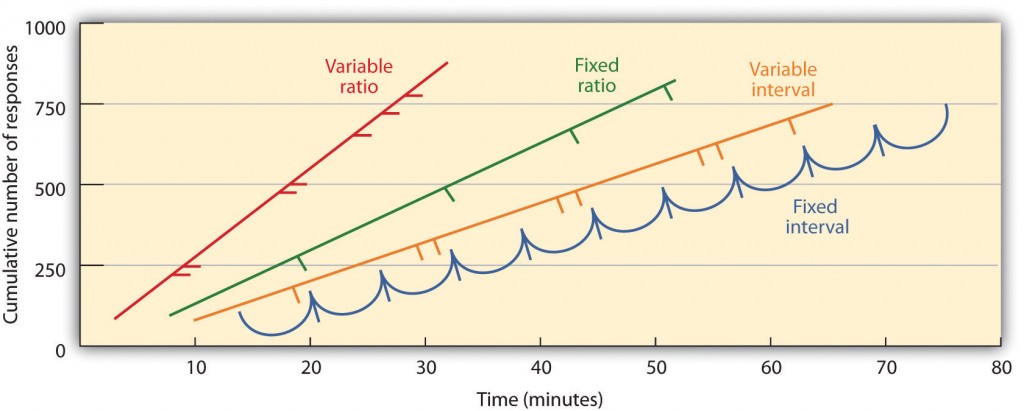

Partial reinforcement schedules are determined by whether the reinforcement is presented on the basis of the time that elapses between reinforcements (interval) or on the basis of the number of responses that the organism engages in (ratio), and by whether the reinforcement occurs on a regular (fixed) or unpredictable (variable) schedule. In a fixed-interval schedule, reinforcement occurs for the first response made after a specific amount of time has passed. For example, on a one-minute fixed-interval schedule the animal receives a reinforcement every minute, assuming it engages in the behaviour at least once during the minute. As you can see in Figure 8.6, "Examples of Response Patterns by Animals Trained under Different Partial Reinforcement Schedules," animals under fixed-interval schedules tend to slow down their responding immediately after the reinforcement but then increase the behaviour again as the time of the next reinforcement gets closer. (Most students study for exams the same way.) In a variable-interval schedule, the reinforcers appear on an interval schedule, but the timing is varied around the average interval, making the actual appearance of the reinforcer unpredictable. An example might be checking your email: you are reinforced by receiving messages that come, on average, say, every 30 minutes, but the reinforcement occurs only at random times. Interval reinforcement schedules tend to produce slow and steady rates of responding.

In a fixed-ratio schedule, a behaviour is reinforced after a specific number of responses. For instance, a rat's behaviour may be reinforced after it has pressed a key 20 times, or a salesperson may receive a bonus after he or she has sold 10 products. As you can see in Figure 8.6, "Examples of Response Patterns by Animals Trained under Different Partial Reinforcement Schedules," once the organism has learned to act in accordance with the fixed-ratio schedule, it will pause only briefly when reinforcement occurs before returning to a high level of responsiveness. A variable-ratio schedule provides reinforcers after an average, but unpredictable, number of responses. Winning money from slot machines or on a lottery ticket is an example of reinforcement that occurs on a variable-ratio schedule. For example, a slot machine (see Figure 8.7, "Slot Machine") may be programmed to provide a win every 20 times the user pulls the handle, on average. Ratio schedules tend to produce high rates of responding because reinforcement increases as the number of responses increases.
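The four schedules described above can be written as small rules that decide, response by response, whether a reinforcer is delivered. The Python sketch below is illustrative only. The function names are hypothetical, the variable-ratio rule is approximated by reinforcing each response with probability 1/mean (an assumption), and the variable-interval rule draws each waiting time uniformly between half and one and a half times the average interval (also an assumption).

```python
import random


def fixed_ratio(n):
    """Reinforce every n-th response."""
    count = 0

    def respond(t=None):
        nonlocal count
        count += 1
        if count == n:
            count = 0
            return True
        return False

    return respond


def variable_ratio(mean_n, rng):
    """Reinforce after an unpredictable number of responses averaging mean_n.

    Assumption: each response pays off independently with probability 1/mean_n.
    """
    def respond(t=None):
        return rng.random() < 1.0 / mean_n

    return respond


def fixed_interval(interval):
    """Reinforce the first response made at least `interval` after the last reinforcer."""
    last = 0.0

    def respond(t):
        nonlocal last
        if t - last >= interval:
            last = t
            return True
        return False

    return respond


def variable_interval(mean_interval, rng):
    """Like fixed_interval, but the required wait varies around mean_interval.

    Assumption: waits are uniform on [0.5 * mean, 1.5 * mean].
    """
    last = 0.0
    wait = rng.uniform(0.5 * mean_interval, 1.5 * mean_interval)

    def respond(t):
        nonlocal last, wait
        if t - last >= wait:
            last = t
            wait = rng.uniform(0.5 * mean_interval, 1.5 * mean_interval)
            return True
        return False

    return respond


if __name__ == "__main__":
    rng = random.Random(0)
    schedules = {
        "fixed-ratio 20": fixed_ratio(20),
        "variable-ratio 20": variable_ratio(20, rng),
        "fixed-interval 30": fixed_interval(30),
        "variable-interval 30": variable_interval(30, rng),
    }
    # One response per time unit for 600 time units.
    for name, respond in schedules.items():
        earned = sum(respond(t) for t in range(1, 601))
        print(f"{name}: {earned} reinforcers")
```

Under a ratio rule, responding faster earns reinforcers faster. Under an interval rule, extra responses between reinforcers earn nothing. This matches the different response rates described in the text.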

Complex behaviours are also created through shaping, the process of guiding an organism's behaviour to the desired outcome through the use of successive approximation to a final desired behaviour. Skinner made extensive use of this process in his boxes. For instance, he could train a rat to press a bar two times to receive food, by first providing food when the animal moved near the bar. When that behaviour had been learned, Skinner would begin to provide food only when the rat touched the bar. Further shaping limited the reinforcement to only when the rat pressed the bar, to when it pressed the bar and touched it a second time, and finally to only when it pressed the bar twice. Although it can take a long time, in this way operant conditioning can create chains of behaviours that are reinforced only when they are completed.

Reinforcing animals if they correctly discriminate between similar stimuli allows scientists to test the animals' ability to learn, and the discriminations that they can make are sometimes remarkable. Pigeons have been trained to distinguish between images of Charlie Brown and the other Peanuts characters (Cerella, 1980), and between different styles of music and art (Porter & Neuringer, 1984; Watanabe, Sakamoto & Wakita, 1995).

Behaviours can also be trained through the use of secondary reinforcers. Whereas a primary reinforcer includes stimuli that are naturally preferred or enjoyed by the organism, such as food, water, and relief from pain, a secondary reinforcer (sometimes called conditioned reinforcer) is a neutral event that has become associated with a primary reinforcer through classical conditioning. An example of a secondary reinforcer would be the whistle given by an animal trainer, which has been associated over time with the primary reinforcer, food. An example of an everyday secondary reinforcer is money. We enjoy having money, not so much for the stimulus itself, but rather for the primary reinforcers (the things that money can buy) with which it is associated.
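The bar-pressing example can be read as a procedure in which the criterion for reinforcement is raised step by step. The Python sketch below illustrates that reading. The stage list paraphrases the example above, while the function name, the numeric coding of behaviour, and the rule of advancing after three consecutive reinforced trials are assumptions made for illustration and are not part of Skinner's procedure as described here.

```python
# Successive approximations to the final behaviour, from the example above.
STAGES = [
    "moves near the bar",
    "touches the bar",
    "presses the bar once",
    "presses the bar and touches it a second time",
    "presses the bar twice",
]


def shape(observed, mastery=3):
    """Simulate a shaping session.

    observed: for each trial, the index in STAGES of the closest
        approximation the animal produced on that trial.
    mastery: hypothetical number of consecutive reinforced trials
        required before the criterion is raised to the next stage.
    Returns (log, criterion): one boolean per trial saying whether food
    was given, and the index of the criterion in force at the end.
    """
    criterion = 0
    streak = 0
    log = []
    for level in observed:
        # Food is given only if the behaviour meets the current criterion.
        reinforced = level >= criterion
        log.append(reinforced)
        if reinforced:
            streak += 1
            if streak == mastery and criterion < len(STAGES) - 1:
                criterion += 1  # demand a closer approximation from now on
                streak = 0
        else:
            streak = 0
    return log, criterion


if __name__ == "__main__":
    trials = [0, 0, 0, 0, 1, 1, 1, 2, 2, 2]
    log, criterion = shape(trials)
    print(log)
    print("Current criterion:", STAGES[criterion])
```

In the sample session the fourth trial goes unreinforced because the criterion has already moved from approaching the bar to touching it.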

Key Takeaways

- Edward Thorndike developed the law of effect: the principle that responses that create a typically pleasant outcome in a particular situation are more likely to occur again in a similar situation, whereas responses that produce a typically unpleasant outcome are less likely to occur again in the situation.

- B. F. Skinner expanded on Thorndike's ideas to develop a set of principles to explain operant conditioning.

- Positive reinforcement strengthens a response by presenting something that is typically pleasant after the response, whereas negative reinforcement strengthens a response by reducing or removing something that is typically unpleasant.

- Positive punishment weakens a response by presenting something typically unpleasant after the response, whereas negative punishment weakens a response by reducing or removing something that is typically pleasant.

- Reinforcement may be either partial or continuous. Partial reinforcement schedules are determined by whether the reinforcement is presented on the basis of the time that elapses between reinforcements (interval) or on the basis of the number of responses that the organism engages in (ratio), and by whether the reinforcement occurs on a regular (fixed) or unpredictable (variable) schedule.

- Complex behaviours may be created through shaping, the process of guiding an organism's behaviour to the desired outcome through the use of successive approximation to a final desired behaviour.

Exercises and Critical Thinking

- Give an example from daily life of each of the following: positive reinforcement, negative reinforcement, positive punishment, negative punishment.

- Consider the reinforcement techniques that you might use to train a dog to catch and retrieve a Frisbee that you throw to it.

- Watch the following two videos from current television shows. Can you determine which learning procedures are being demonstrated?

- The Office: http://www.break.com/usercontent/2009/11/the-office-altoid-experiment-1499823

- The Big Bang Theory [YouTube]: http://www.youtube.com/watch?v=JA96Fba-WHk

References

Cerella, J. (1980). The pigeon's analysis of pictures. Pattern Recognition, 12, 1–6.

Kassin, S. (2003). Essentials of psychology. Upper Saddle River, NJ: Prentice Hall. Retrieved from Essentials of Psychology Prentice Hall Companion Website: http://wps.prenhall.com/hss_kassin_essentials_1/15/3933/1006917.cw/index.html

Porter, D., & Neuringer, A. (1984). Music discriminations by pigeons. Journal of Experimental Psychology: Animal Behavior Processes, 10(2), 138–148.

Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. Washington, DC: American Psychological Association.

Thorndike, E. L. (1911). Animal intelligence: Experimental studies. New York, NY: Macmillan. Retrieved from http://www.archive.org/details/animalintelligen00thor

Watanabe, S., Sakamoto, J., & Wakita, M. (1995). Pigeons' discrimination of paintings by Monet and Picasso. Journal of the Experimental Analysis of Behavior, 63(2), 165–174.

Image Attributions

Figure 8.5: "Skinner box" (http://en.wikipedia.org/wiki/File:Skinner_box_photo_02.jpg) is licensed under the CC BY SA 3.0 license (http://creativecommons.org/licenses/by-sa/3.0/deed.en). "Skinner box scheme" by Andreas1 (http://en.wikipedia.org/wiki/File:Skinner_box_scheme_01.png) is licensed under the CC BY SA 3.0 license (http://creativecommons.org/licenses/by-sa/3.0/deed.en)

Figure 8.6: Adapted from Kassin (2003).

Figure 8.7: "Slot Machines in the Hard Rock Casino" by Ted Murpy (http://commons.wikimedia.org/wiki/File:HardRockCasinoSlotMachines.jpg) is licensed under CC BY 2.0. (http://creativecommons.org/licenses/by/2.0/deed.en).


Source: https://opentextbc.ca/introductiontopsychology/chapter/7-2-changing-behavior-through-reinforcement-and-punishment-operant-conditioning/